Python tutorial to remove duplicate lines from a text file:

In this tutorial, we will learn how to remove all duplicate lines from a text file using Python. The program reads an input text file line by line and writes each distinct line to an output text file only once. I will also show you how to handle exceptions when the path of a file is invalid.

Algorithm to remove duplicate lines from a file:

Suppose the input file contains the following text:

First Line

Second Line

First Line

First Line

First Line

Once we remove all duplicate lines from this file, the output file will contain the following two lines:

First Line

Second Line

The idea is to remove the duplicates while writing the lines to the output file. For each line read, the program checks whether the same line has already been written. If it has, the line is skipped.

Python provides built-in functions for working with files. If we are only reading the content of a file, we open it in read mode. For writing content to a file, we open it in write mode.

Set:

We will use a set to keep track of the lines of the input file that we have already encountered. A set cannot contain duplicate items, and we can check whether a line was previously added with a fast membership test on the set.
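To make the set idea concrete, here is a minimal sketch that works on an in-memory list instead of a file. The names lines, seen, and unique are illustrative only and are not taken from the program in this tutorial.

```python
# Illustrative only: deduplicate an in-memory list of lines with a set.
lines = ["First Line", "Second Line", "First Line", "First Line", "First Line"]

seen = set()   # lines encountered so far
unique = []    # distinct lines, in first-seen order

for line in lines:
    if line not in seen:   # membership test on the set
        unique.append(line)
        seen.add(line)

print(unique)  # ['First Line', 'Second Line']
```

The separate list is needed because a set does not remember insertion order, while the output should keep the lines in their original order.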

hashlib:

Instead of adding the lines themselves, we can add the MD5 hash value of each line. MD5 is a cryptographic hash algorithm that converts input of any length to a fixed-length 16-byte (128-bit) digest. Written in hexadecimal, that digest is a 32-character string.
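The digest sizes can be checked directly with hashlib. This is a small sketch; the variable names raw, hex_text, and same are chosen only for illustration.

```python
import hashlib

line = "First Line"
md5 = hashlib.md5(line.encode("utf-8"))  # hashlib works on bytes, so encode first

raw = md5.digest()          # the digest as a bytes object
hex_text = md5.hexdigest()  # the same digest as a hexadecimal string

print(len(raw))       # 16
print(len(hex_text))  # 32

# Equal input always produces an equal digest.
same = hashlib.md5("First Line".encode("utf-8")).hexdigest() == hex_text
print(same)  # True
```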

Algorithm:

The following algorithm will be used in the program:

- Open the input file in read mode because we are only reading the content of this file.

- Open the output file in write mode because we are writing content to this file.

- Read the content of the input file line by line. For each line, check if an equal line was already written to the output file or not.

- If not, then write this line to the output file, and save the MD5 hash value of the line to a set. As discussed before, we will check each line’s hash value instead of checking and storing the full line. For a file with long lines, this keeps the set small because every stored value has the same fixed length.

- If the hash value is already added to the set, skip that line.

- Once all lines of the input file are checked, the output file will contain all the contents of the input file without any duplicate lines.
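The steps above can be sketched as a small generator that works on any iterable of lines, which makes the logic easy to try without touching real files. The helper name unique_lines and the sample data are hypothetical, and stripping trailing whitespace before hashing is an assumption of this sketch.

```python
import hashlib
from typing import Iterable, Iterator


def unique_lines(lines: Iterable[str]) -> Iterator[str]:
    """Yield each line whose content has not been seen before (hypothetical helper)."""
    seen_hashes = set()
    for line in lines:
        # Assumption: trailing whitespace and the newline are ignored when comparing.
        digest = hashlib.md5(line.rstrip().encode("utf-8")).hexdigest()
        if digest not in seen_hashes:
            seen_hashes.add(digest)
            yield line


sample = ["First Line\n", "Second Line\n", "First Line\n", "First Line\n", "First Line\n"]
result = list(unique_lines(sample))
print(result)  # ['First Line\n', 'Second Line\n']
```

Because an open text file is itself an iterable of lines, the same helper could be fed a file object directly.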

Method 1: Python program to remove duplicate lines from a text (.txt) file:

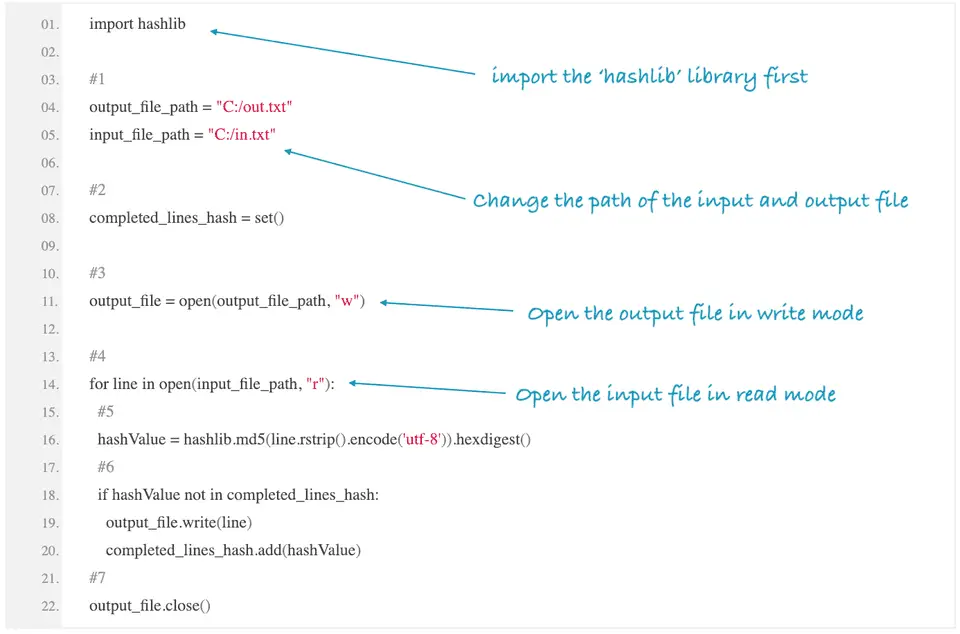

The following Python program removes all duplicate lines from the input file in.txt and writes the result to the output file out.txt. Please update the variables output_file_path and input_file_path before running this program.

    import hashlib

    #1
    output_file_path = "c:\\Users\\admin\\Desktop\\out.txt"
    input_file_path = "c:\\Users\\admin\\Desktop\\in.txt"

    #2
    completed_lines_hash = set()

    #3
    input_file = open(input_file_path, "r")
    output_file = open(output_file_path, "w")

    #4
    for line in input_file:
        #5
        hashValue = hashlib.md5(line.rstrip().encode('utf-8')).hexdigest()
        #6
        if hashValue not in completed_lines_hash:
            output_file.write(line)
            completed_lines_hash.add(hashValue)

    #7
    output_file.close()
    input_file.close()

Download it from Github

Explanation:

The commented numbers in the above program denote the step number below:

1. The input and output file paths are assigned to the input_file_path and output_file_path variables respectively. You need to change these values to your own input and output file paths. You can drag and drop a file onto the terminal to find its path.

2. Create one set variable, completed_lines_hash, to keep the MD5 hash value of each line of the input file.

3. Open the input file in read mode and the output file in write mode. The open() method is used to open a file, and the modes "r" and "w" select read mode and write mode. We are opening the output file in write mode because we are going to write content to this file.

4. Start one for loop to read the content of the input file line by line.

5. Find the MD5 hash value of the current line. Before calculating the hash, we remove all trailing whitespace, including the newline, from the end of the line with the rstrip() method. The hashlib module is used to find the hash value of a line.

6. Check whether this hash value is already in the set. If not, write the line to the output file and add the hash value to the set.

7. Finally, close the input and the output text files.
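One consequence of calling rstrip() before hashing is worth seeing in isolation: lines that differ only in trailing whitespace count as duplicates, while leading whitespace and letter case still matter. The helper name line_key below is hypothetical and only wraps the same expression the program uses.

```python
import hashlib


def line_key(line: str) -> str:
    """Hypothetical helper: the value used to decide whether two lines are equal."""
    return hashlib.md5(line.rstrip().encode("utf-8")).hexdigest()


# Trailing spaces and the newline are ignored...
print(line_key("First Line\n") == line_key("First Line   "))   # True
# ...but leading spaces and letter case still make lines different.
print(line_key("First Line\n") == line_key("  First Line\n"))  # False
print(line_key("First Line\n") == line_key("first line\n"))    # False
# Every blank or whitespace-only line produces the same key.
print(line_key("\n") == line_key("   \n"))                     # True
```

The last check means that only the first blank line of the input is kept in the output.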

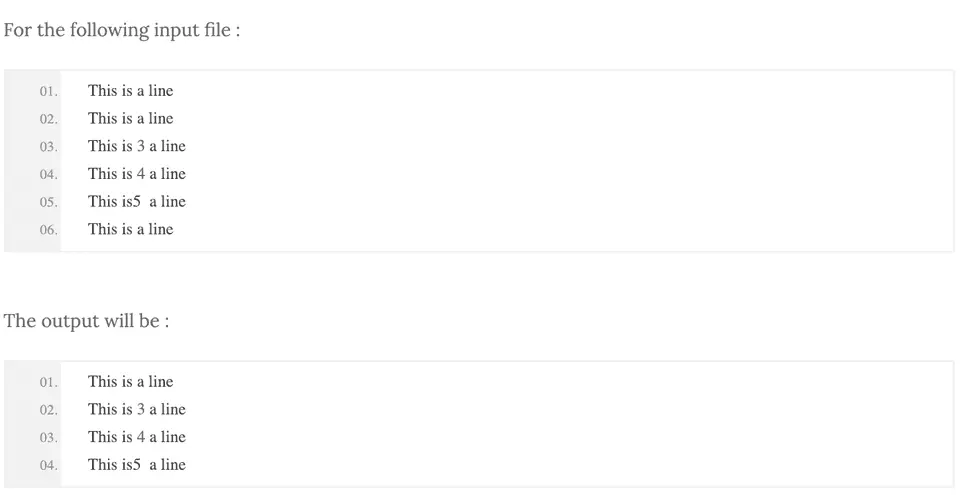

Sample Output :

Method 2: How to handle invalid paths:

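The above program raises an exception if the input file is not found or if a path is invalid. In Python 3 this is an OSError (IOError is an alias of OSError), for example a FileNotFoundError for a missing file. We can use a try-except block to handle the error.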

We can also use a with statement to open the files. It is concise and it ensures that the file is closed at the end.
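As a quick check of that claim, the sketch below writes to a file inside a temporary directory and inspects the closed attribute of the file object. The file name demo.txt and the variable names are made up for this illustration.

```python
import os
import tempfile

# A temporary directory is used so the example does not touch real files.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "demo.txt")  # hypothetical file name

    with open(path, "w") as demo_file:
        demo_file.write("First Line\n")
        inside = demo_file.closed  # still open inside the block

    after = demo_file.closed       # closed automatically when the block ends

print(inside, after)  # False True
```

No explicit close() call is needed, and the file is also closed if an exception is raised inside the block.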

The following program uses a try-except block and a with statement:

    import hashlib

    output_file_path = "c:\\Users\\admin\\Desktop\\out.txt"
    input_file_path = "c:\\Users\\admin\\Desktop\\in.txt"

    completed_lines_hash = set()

    try:
        with open(input_file_path, "r") as input_file, open(output_file_path, "w") as output_file:
            for line in input_file:
                hashValue = hashlib.md5(line.rstrip().encode('utf-8')).hexdigest()
                if hashValue not in completed_lines_hash:
                    output_file.write(line)
                    completed_lines_hash.add(hashValue)
    except IOError as e:
        print(f"I/O error: {e.strerror}")

For an invalid file path, it will print the following error:

    I/O error: No such file or directory

If the output path is valid but the file doesn’t exist, the program creates the output file. If the output path is invalid, it prints a similar error.

Download it from Github
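In Python 3, IOError is an alias of OSError, and a missing input file raises the subclass FileNotFoundError. The following sketch shows that relationship with a path that is guaranteed not to exist; the file name does_not_exist.txt and the variable caught are hypothetical.

```python
import os
import tempfile

caught = None

with tempfile.TemporaryDirectory() as tmp_dir:
    # The directory is freshly created and empty, so this file cannot exist.
    missing_path = os.path.join(tmp_dir, "does_not_exist.txt")
    try:
        with open(missing_path, "r") as input_file:
            input_file.read()
    except OSError as e:  # also catches FileNotFoundError and PermissionError
        caught = e

print(type(caught).__name__)        # FileNotFoundError
print(isinstance(caught, IOError))  # True
print(IOError is OSError)           # True
```

Catching IOError, as the program above does, therefore handles a missing input file as well as other operating system errors such as a permission problem.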

Conclusion:

I hope that you have found this article helpful. Try to run the program and please contact us if you have any queries.
